Lesson 4: Kubernetes

Now that you have containerised your application, it's time to sail out to the sea!

Kubernetes, also known as k8s, is an open-source container orchestration system that automates the deployment, scaling, and management of containerised applications. Originally developed at Google, it was released as open source in 2014.

Why Kubernetes?

Kubernetes is the industry’s leading container orchestration platform. It has a strong community and rose to dominance due to its flexibility and rich feature set. In recent years, it has been widely adopted by cloud providers. Major cloud providers like Amazon Web Services, Microsoft Azure, and Google Cloud provide fully managed Kubernetes services to run and operate Kubernetes clusters in the cloud. Kubernetes is platform-agnostic - it can run on-premises, in the cloud, or on a developer’s laptop. It goes hand-in-hand with the cloud because those who build and deploy containerised applications to the cloud need a solution to manage and orchestrate multiple containers.

When there are only a couple of applications, it is easy for DevOps to deploy them manually to multiple hosts using containers. However, when the number starts to grow, it quickly becomes demanding and impractical to manage hundreds or even thousands of containers manually. Large-scale deployments mean that scores of containers have to be started, stopped, and restarted every day. This is why a container orchestration tool like Kubernetes is a much-needed solution to help organisations run operations. Kubernetes not only allows you to deploy containers at scale (without having to manually track where each is deployed) but also automates scaling, load-balancing and self-healing. When you declare the desired number of replicas, Kubernetes scales the number of containers up or down to match. Kubernetes also ensures a high level of availability. If a container (or pod) fails, Kubernetes automatically replaces it.

Understand Kubernetes

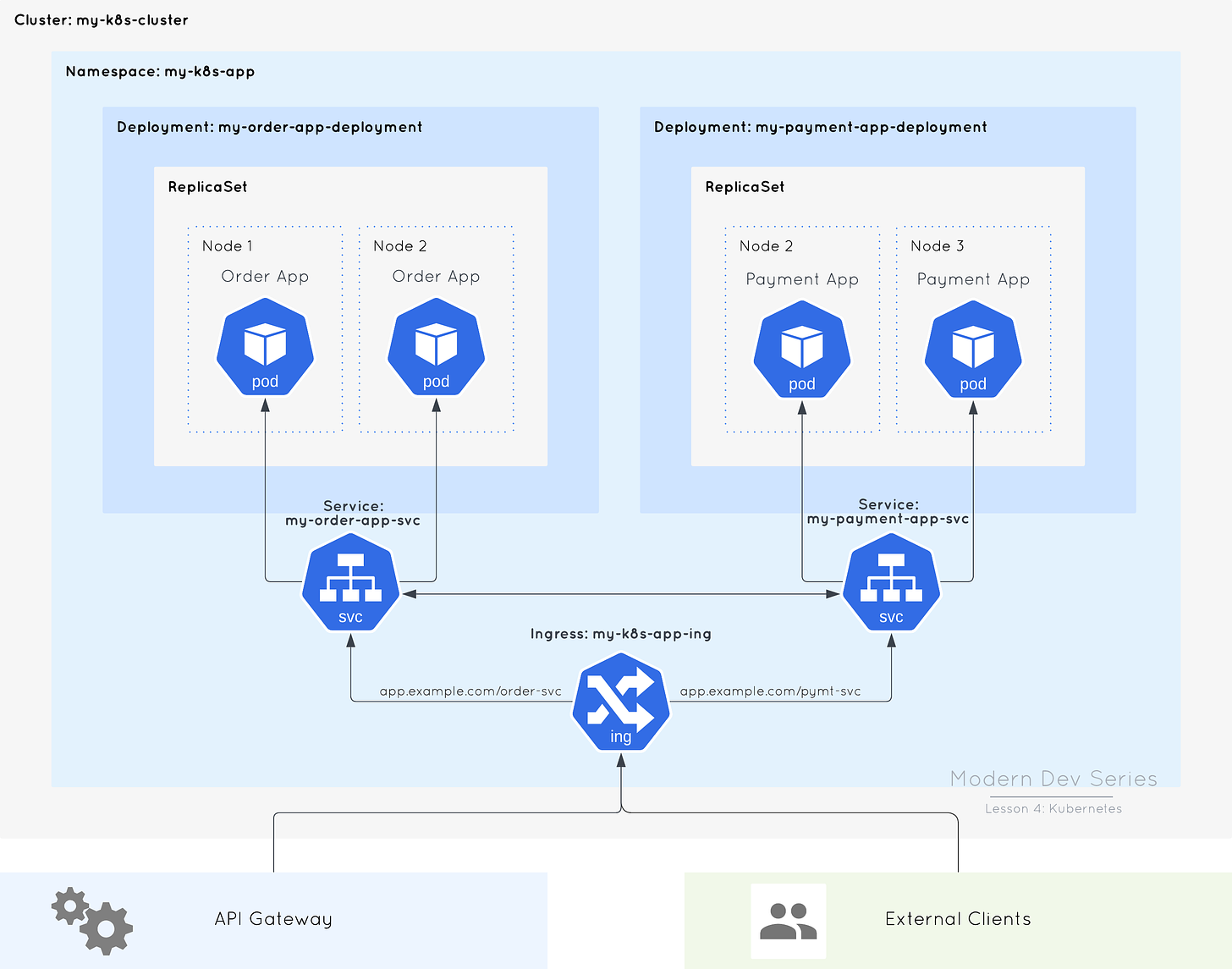

This is a simple topology of Kubernetes workloads in a Kubernetes cluster. A Kubernetes cluster is a set of machines, called nodes, that run the control plane (which controls the cluster) and workloads (applications). In this post, I do not explain the Kubernetes architecture (i.e. the control plane) but focus on how workloads are deployed to a Kubernetes cluster.

Kubernetes uses an API to manage objects, which in the figure above are Namespaces, Pods, ReplicaSets, Deployments, Services, and Ingresses. These are called API objects. Let’s look at each of them.

Pods

A pod is the smallest unit of deployment. Kubernetes does not deploy containers directly; it deploys pods.

A pod contains one or more containers. Each pod should only run one primary container (the application) but may also run helper containers for additional features like logging, monitoring and security.

Pods are ephemeral. They can be removed and replaced at any time. For example, if a pod fails, or the node it runs on fails, Kubernetes automatically creates a new pod to replace it (provided the pod is managed by a controller such as a Deployment).

Each pod has its own IP address, which is dynamically assigned and internal to the cluster (you cannot access it from the Internet).

To allow pods (e.g. Order and Payment services) to communicate with each other using a fixed IP address, we must use Service objects.

Usually we do not deploy pods manually but use Deployment objects (higher-level abstraction) to manipulate them.
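To make the idea of a primary container plus a helper container concrete, here is a minimal sketch of a Pod manifest. It is for illustration only: the names (order, log-tailer, logs) and the choice of the nginx and busybox images are assumptions, and in practice you would normally let a Deployment create pods like this for you.

```yaml
# Hypothetical Pod with one primary container and one helper (sidecar) container.
apiVersion: v1
kind: Pod
metadata:
  name: order                 # hypothetical name
  labels:
    app: order
spec:
  containers:
    - name: app               # primary container: the application itself
      image: nginx            # stand-in image; replace with your application image
      ports:
        - containerPort: 80
      volumeMounts:
        - name: logs
          mountPath: /var/log/nginx
    - name: log-tailer        # helper container that reads the primary container's log file
      image: busybox
      command: ["sh", "-c", "tail -F /var/log/nginx/access.log"]
      volumeMounts:
        - name: logs
          mountPath: /var/log/nginx
  volumes:
    - name: logs              # scratch volume shared by both containers in the pod
      emptyDir: {}
```

Both containers share the pod's IP address and the logs volume, which is what lets the helper read files written by the application.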

Deployments

A deployment is the standard way to deploy workloads - it allows you to declare how many replicas of a pod should be running at a time.

A deployment creates a ReplicaSet object to spin up and down the requested number of pods.

A deployment, through its ReplicaSet, monitors the pods - if a pod dies, a replacement is created automatically.

During an update, deployments use the rolling update strategy by default: old pods are gradually replaced with new ones (i.e. the new ReplicaSet is scaled up while the old ReplicaSet is scaled down).
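As a rough sketch of how the replica count and the rolling update behaviour are declared, the manifest below sets both explicitly. The name order, the image tag, and the maxSurge and maxUnavailable values are assumptions chosen for illustration; if you omit the strategy block, Kubernetes applies its own defaults.

```yaml
# Hypothetical Deployment that declares three replicas and an explicit rolling update policy.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: order                 # hypothetical name
spec:
  replicas: 3                 # desired number of pods
  selector:
    matchLabels:
      app: order              # must match the pod template labels below
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 1             # at most one extra pod above the desired count during an update
      maxUnavailable: 0       # never drop below the desired count during an update
  template:
    metadata:
      labels:
        app: order
    spec:
      containers:
        - name: app
          image: nginx:1.25   # stand-in image; changing this field triggers a rolling update
```

With these values, an update starts one new pod, waits for it to become ready, removes one old pod, and repeats until all three are replaced.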

Services

A Kubernetes service is a logical group of pods (replicas) running the same application that provides a single IP address to expose a service to internal clients (e.g. pods inside the cluster) or external clients (e.g. end users).

For example, the two pod replicas of Order service are deployed to different nodes in our cluster, but they are backed by a Service object which exposes them to other clients or pods via a single interface (i.e. a virtual IP address).

A Kubernetes service acts like a load balancer, which routes requests to one of the pods sitting behind it.

The three most commonly used Service types are ClusterIP, NodePort, and LoadBalancer.

ClusterIP

ClusterIP is the default Service type in Kubernetes. A ClusterIP service exposes your pods as a single service to other clients inside the cluster only. Once created, it is assigned a cluster-internal IP address. There is no external access.
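A ClusterIP service for the Order pods might look like the sketch below. The service name and the app: order label are assumptions; the selector simply has to match the labels on the pods you want to group.

```yaml
# Hypothetical ClusterIP Service that groups all pods labelled app=order.
apiVersion: v1
kind: Service
metadata:
  name: order                 # hypothetical name; other pods can reach the service by this name
spec:
  type: ClusterIP             # the default, shown here for clarity
  selector:
    app: order                # traffic is routed to pods carrying this label
  ports:
    - port: 80                # port exposed on the service's cluster-internal IP
      targetPort: 80          # port the container listens on
```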

NodePort

A NodePort service allows external access to the service. It opens a port, called the nodePort, in the range of 30000 to 32767 by default, on all nodes in the cluster and binds that port to the service. To access a NodePort service from outside the cluster, we use a node IP address and the service’s nodePort. Any traffic that is sent to this port on any node in the cluster is forwarded to the service. In practice, NodePort services are less commonly used in production than the other types.
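The following sketch shows what a NodePort service could look like. The name and the port number 30080 are assumptions; if you leave nodePort out, Kubernetes picks a free port from the range for you.

```yaml
# Hypothetical NodePort Service exposing pods labelled app=order on every node.
apiVersion: v1
kind: Service
metadata:
  name: order-nodeport        # hypothetical name
spec:
  type: NodePort
  selector:
    app: order
  ports:
    - port: 80                # port on the service's cluster-internal IP
      targetPort: 80          # port the container listens on
      nodePort: 30080         # assumed value; opened on every node in the cluster
```

With this in place, a request to any node's IP address on port 30080 would be forwarded to one of the matching pods.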

LoadBalancer

A LoadBalancer service exposes your pods to clients outside the cluster by creating a network load balancer, which load-balances network traffic at L4 of the OSI model. Kubernetes itself does not provision load balancers. To support the LoadBalancer service type, your cluster must have a controller, supported by the cloud provider, that provisions network load balancers. For example, if you host your Kubernetes cluster on AWS, you can install the AWS Load Balancer Controller add-on on your cluster so that it creates AWS Network Load Balancers. Depending on whether the load balancer is internal (private subnet) or external (public subnet), the service is exposed to other clients in a private subnet or to the Internet. Exposing your services this way can be costly as it requires creating a load balancer, with its own public IP address, for each service. In practice, many people use ClusterIP services with Ingresses.
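On the Kubernetes side, requesting a load balancer is mostly a matter of changing the service type, as in this minimal sketch. The name is an assumption, and the provider-specific annotations that control details such as internal versus external placement are deliberately left out because they differ between cloud providers.

```yaml
# Hypothetical LoadBalancer Service; a controller in the cluster provisions the actual load balancer.
apiVersion: v1
kind: Service
metadata:
  name: order-public          # hypothetical name
  # Provider-specific annotations (e.g. internal vs external) would go here; omitted on purpose.
spec:
  type: LoadBalancer
  selector:
    app: order
  ports:
    - port: 80                # port exposed by the load balancer
      targetPort: 80          # port the container listens on
```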

Ingresses

An ingress exposes one or more services externally using an HTTP load balancer, which load-balances application traffic at L7.

An internal or external load balancer must be provisioned to expose the services to external clients in a private subnet or to the Internet.

An ingress is more flexible than a LoadBalancer service as it allows path-based or host-based (subdomain) routing to your services, much like a reverse proxy.

Creating an Ingress resource on its own has no effect. You must have an Ingress controller running in your cluster to provision load balancers for Ingress resources. For example, AWS Load Balancer Controller in a cluster automatically provisions an Application Load Balancer when you create an ingress.
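A sketch of path-based routing to the Order and Payment services could look like this. The host name, the paths, the service names and the ingressClassName value are all assumptions; the class name in particular must match whatever Ingress controller is installed in your cluster.

```yaml
# Hypothetical Ingress routing two URL paths to two ClusterIP services.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: shop                          # hypothetical name
spec:
  ingressClassName: example-class     # assumed value; depends on the installed Ingress controller
  rules:
    - host: shop.example.com          # placeholder host
      http:
        paths:
          - path: /orders
            pathType: Prefix
            backend:
              service:
                name: order           # assumed ClusterIP service for the Order pods
                port:
                  number: 80
          - path: /payments
            pathType: Prefix
            backend:
              service:
                name: payment         # assumed ClusterIP service for the Payment pods
                port:
                  number: 80
```

One load balancer can then serve both services, which is the cost advantage over creating a LoadBalancer service for each.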

Namespaces

Namespaces are used to separate resources in a cluster by concern (e.g. application, project, or team).

Namespaces provide a scope for object names. Object names must be unique in a namespace, but not across namespaces.
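The scoping rule can be illustrated with two namespaces that each contain a service with the same name. The namespace names team-a and team-b are assumptions made for this sketch.

```yaml
# Two hypothetical namespaces, each holding its own Service named "order".
apiVersion: v1
kind: Namespace
metadata:
  name: team-a
---
apiVersion: v1
kind: Namespace
metadata:
  name: team-b
---
apiVersion: v1
kind: Service
metadata:
  name: order
  namespace: team-a           # this "order" lives in team-a
spec:
  selector:
    app: order
  ports:
    - port: 80
---
apiVersion: v1
kind: Service
metadata:
  name: order                 # same name is allowed because the namespace differs
  namespace: team-b
spec:
  selector:
    app: order
  ports:
    - port: 80
```

Creating a second service named order inside team-a would be rejected, while the two above coexist without conflict.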

There are two ways to manage Kubernetes API objects:

- Using the command line tool, kubectl, directly (imperative)
- Using Kubernetes manifests (declarative)

Using Kubernetes manifests is the recommended way as they can be version-controlled. A Kubernetes manifest is a specification of a Kubernetes object in YAML format that describes the resource you want to create and the desired state of the object that Kubernetes will maintain when you apply it. A detailed discussion of the API object specifications is beyond the scope of this post. However, let’s see how to create a manifest and apply it. You must already have Docker and minikube running on your computer to follow this example.

In this example, we will create a Deployment manifest and use the nginx image to deploy a container in a pod. First, we use kubectl to generate a basic manifest:

    $ kubectl create deployment nginx --image=nginx --dry-run=client -o yaml > deploy.yaml

This will output a deploy.yaml file with the following content:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      creationTimestamp: null
      labels:
        app: nginx
      name: nginx
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: nginx
      strategy: {}
      template:
        metadata:
          creationTimestamp: null
          labels:
            app: nginx
        spec:
          containers:
          - image: nginx
            name: nginx
            resources: {}
    status: {}

Now you have a basic manifest to work on and you can start extending it by adding additional fields to the specification. This demo does not show you how to configure nginx. You can edit the file however you want or leave it untouched. Once you are done, you can apply the manifest using this command:

    $ kubectl apply -f deploy.yaml

This will create a Deployment object in the current namespace. To verify the deployment is up and running, use this command:

    $ kubectl get deployment nginx

It should show an output like this:

    NAME    READY   UP-TO-DATE   AVAILABLE   AGE
    nginx   1/1     1            1           19s

Let’s go back to the manifest and change spec.replicas to 2 and run the kubectl apply command again. This scales the deployment to two pods. Run kubectl get deployment nginx and it should show the following output:

    NAME    READY   UP-TO-DATE   AVAILABLE   AGE
    nginx   2/2     2            2           1m52s

Let’s also verify the pods by listing the pods with label selector:

    $ kubectl get pods -l=app=nginx

It should print an output like this:

    NAME                     READY   STATUS    RESTARTS   AGE
    nginx-748c667d99-lcxbn   1/1     Running   0          1m10s
    nginx-748c667d99-lqjlf   1/1     Running   0          2m56s

This demonstrates automated deployment. Once you specify the desired number of pod replicas, Kubernetes automatically scales the ReplicaSet and schedules a new pod to run on one of the available nodes in the cluster.

Finally, we can remove the deployment by using the manifest file used to create it:

    $ kubectl delete -f deploy.yaml

Kubernetes for developers

In the previous section, I covered the fundamentals of Kubernetes by introducing the basic concepts and showing you how applications are deployed to Kubernetes. I should also quickly mention other crucial Kubernetes concepts that developers will come across in their day-to-day job - Volumes, ConfigMaps and Secrets.

Volumes

Docker uses volumes to mount external storage for persisting data generated by containers and sharing data between machines. Similarly, Kubernetes lets you mount volumes into a pod for data persistence and sharing data across pods.

A pod can have multiple volumes, and each container running in it can mount any number of these volumes in different locations.

Ephemeral volumes, such as emptyDir, are destroyed when the pod ceases to exist. If you need to preserve data beyond a pod’s lifetime, use persistent volumes.

Common use cases for persistent volumes are providing storage for application logs, user-generated files, and database applications.
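The difference between the two kinds of storage can be sketched in one pod that mounts both. The names (uploads, uploader, scratch), the mount paths and the 1Gi size are assumptions, and the claim relies on the cluster having a default storage class, which is assumed here rather than guaranteed.

```yaml
# Hypothetical claim for persistent storage; assumes the cluster has a default storage class.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: uploads
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
---
# Hypothetical Pod mounting one ephemeral volume and one persistent volume.
apiVersion: v1
kind: Pod
metadata:
  name: uploader
spec:
  containers:
    - name: app
      image: nginx                    # stand-in image
      volumeMounts:
        - name: scratch
          mountPath: /tmp/scratch     # data here is lost when the pod is removed
        - name: uploads
          mountPath: /usr/share/nginx/html/uploads   # data here outlives the pod
  volumes:
    - name: scratch
      emptyDir: {}
    - name: uploads
      persistentVolumeClaim:
        claimName: uploads            # refers to the claim defined above
```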

ConfigMaps

ConfigMaps are used to store configuration (non-confidential) data in key-value pairs in Kubernetes for pods to consume.

Because applications usually need externalised configuration to be injected into their containers, ConfigMaps provide a way to inject configuration data into a pod. They are a great way to separate configuration data from application code.

ConfigMaps can be used as a data source to pass command-line arguments or environment variables to a container. They can also be mounted as a volume.

When mounted as a volume, projected keys are automatically updated when their ConfigMaps are updated. It is up to the application to detect the changes in the mounted volume and reload the configuration data as necessary.
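Both ways of consuming a ConfigMap can be sketched together. The ConfigMap name, its keys and the mount path are assumptions made for illustration.

```yaml
# Hypothetical ConfigMap holding one simple value and one file-like value.
apiVersion: v1
kind: ConfigMap
metadata:
  name: order-config
data:
  LOG_LEVEL: info
  app.properties: |
    greeting=hello
---
# Hypothetical Pod consuming the ConfigMap as an environment variable and as a volume.
apiVersion: v1
kind: Pod
metadata:
  name: order
spec:
  containers:
    - name: app
      image: nginx                    # stand-in image
      env:
        - name: LOG_LEVEL             # read once at container start
          valueFrom:
            configMapKeyRef:
              name: order-config
              key: LOG_LEVEL
      volumeMounts:
        - name: config
          mountPath: /etc/order       # each key appears as a file in this directory
          readOnly: true
  volumes:
    - name: config
      configMap:
        name: order-config
```

Values passed as environment variables are fixed when the container starts, whereas the files under the mount path are the part that gets refreshed when the ConfigMap changes.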

Secrets

Secrets are objects used to store sensitive data such as passwords, SSH keys, TLS secrets, and tokens in key-value pairs in Kubernetes.

Like ConfigMaps, secrets are injected into pods as environment variables or through a volume mount. It may not be the best idea to inject secrets as environment variables, as applications often print environment variables to their logs, which can inadvertently leak the secrets.

Unlike ConfigMaps, secrets are handled by Kubernetes in specific ways to provide additional protection. For example, secrets are only sent to nodes running pods that require them and are stored in a tmpfs, which keeps its data in memory only.

Secrets store data in Base64-encoded format (an encoding, not encryption), which allows them to hold binary data. Since secrets are not encrypted, their manifests should not be committed to a version control system. Thus, it is not recommended to use manifests for secrets.

Secrets are, by default, stored unencrypted in Kubernetes. Anyone with API access or authorised to create pods in a namespace can access or read the secrets.
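To show why Base64 offers no protection, and how a secret is mounted as a volume rather than injected as an environment variable, here is a sketch for illustration only. The names and the mount path are assumptions, and the value is a throwaway placeholder; a manifest like the first one is exactly the kind of file that should not be committed to version control.

```yaml
# Hypothetical Secret; the value is only Base64-encoded and can be decoded by anyone who reads it.
apiVersion: v1
kind: Secret
metadata:
  name: db-credentials
type: Opaque
data:
  password: cGFzc3dvcmQ=              # Base64 of the placeholder string "password"
---
# Hypothetical Pod that mounts the secret as read-only files instead of environment variables.
apiVersion: v1
kind: Pod
metadata:
  name: order
spec:
  containers:
    - name: app
      image: nginx                    # stand-in image
      volumeMounts:
        - name: db-credentials
          mountPath: /etc/secrets     # the key "password" appears as a file in this directory
          readOnly: true
  volumes:
    - name: db-credentials
      secret:
        secretName: db-credentials
```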

Kubernetes is simple to use but hard to manage. Unless you have a highly skilled team to run Kubernetes on your own infrastructure, you will have a better chance of getting off on the right foot if you run Kubernetes in the cloud. Cloud providers usually provide managed Kubernetes services to offload operational tasks from their users. These platforms allow you to quickly deploy an enterprise-grade Kubernetes cluster on their fully managed cloud infrastructure so that your team can focus on building and deploying applications and spend less time on cluster management. To run a managed Kubernetes cluster in the cloud, you can choose from Amazon Elastic Kubernetes Service (Amazon EKS), Azure Kubernetes Service (AKS), and Google Kubernetes Engine (GKE), to name but a few.

Introducing Kubernetes to your organisation comes with initial costs such as training, the time your engineers invest in learning Kubernetes, and the extra resources required to run a production-ready Kubernetes cluster. Despite all its benefits, Kubernetes is no panacea for all corporate DevOps issues. If you are running a monolith or just a couple of microservices, using Kubernetes is simply not worth it. Running Kubernetes requires expertise - it just does not work if you have limited resources in your team. Investment in Kubernetes is most likely to pay off when you use it to automate many application deployments (not just a few) across your organisation, freeing your DevOps team’s time for more important tasks.

As a developer, you can increase your market value by learning Kubernetes, which is a highly sought-after skill in today’s job market. As cloud adoption has accelerated in recent years, many companies are desperate for people with cloud knowledge, which usually also demands experience in Kubernetes. Kubernetes.io is a great place to learn Kubernetes. Those who want to establish their credibility in the job market and consider certification valuable can get certified by taking the Certified Kubernetes Application Developer (CKAD) exam.

Kubernetes is a powerful and sophisticated orchestration platform. For developers, learning more than just the concepts is fundamental to success. For example, one should have practical experience of using Kubernetes on a cloud platform (each is slightly different in terms of functionality, command-line interface and management console) and know how to write Kubernetes YAML manifests. Your job may require you to handle deployments, so it is important to familiarise yourself with the manifests and with the cloud platform that your company uses to deploy Kubernetes workloads. In some organisations, some of these tasks are either automated or assigned to the DevOps team. Even if it is someone else’s job to deploy your application to Kubernetes, you are probably still expected by your employer to own and troubleshoot it. In my career, I have seen teams that failed for lack of working knowledge of Kubernetes. There is no shortcut to success.